The Localization Paradigm Shift: Traditional Tools vs. AI-Native Translation Workflows

This article provides a technical comparison between the traditional stack and modern AI-native workflows, outlining why the shift is essential for organizational scalability.

Posted on Feb 9, 2026

The demand for hyper-localized content is growing rapidly. Yet many organizations are trying to meet today's demands with tooling designed a decade ago.

For decades, the localization industry relied on a stable stack: Computer-Assisted Translation (CAT) tools, translation memories (TM), and rigid rule-based or early neural machine translation engines. These tools were excellent for maintaining consistency but were fundamentally designed as databases, not reasoning engines.

Today, Generative AI and Large Language Models (LLMs) have entered the arena, not just as a "better Google Translate," but as a fundamental architectural shift in how we process cross-lingual content.

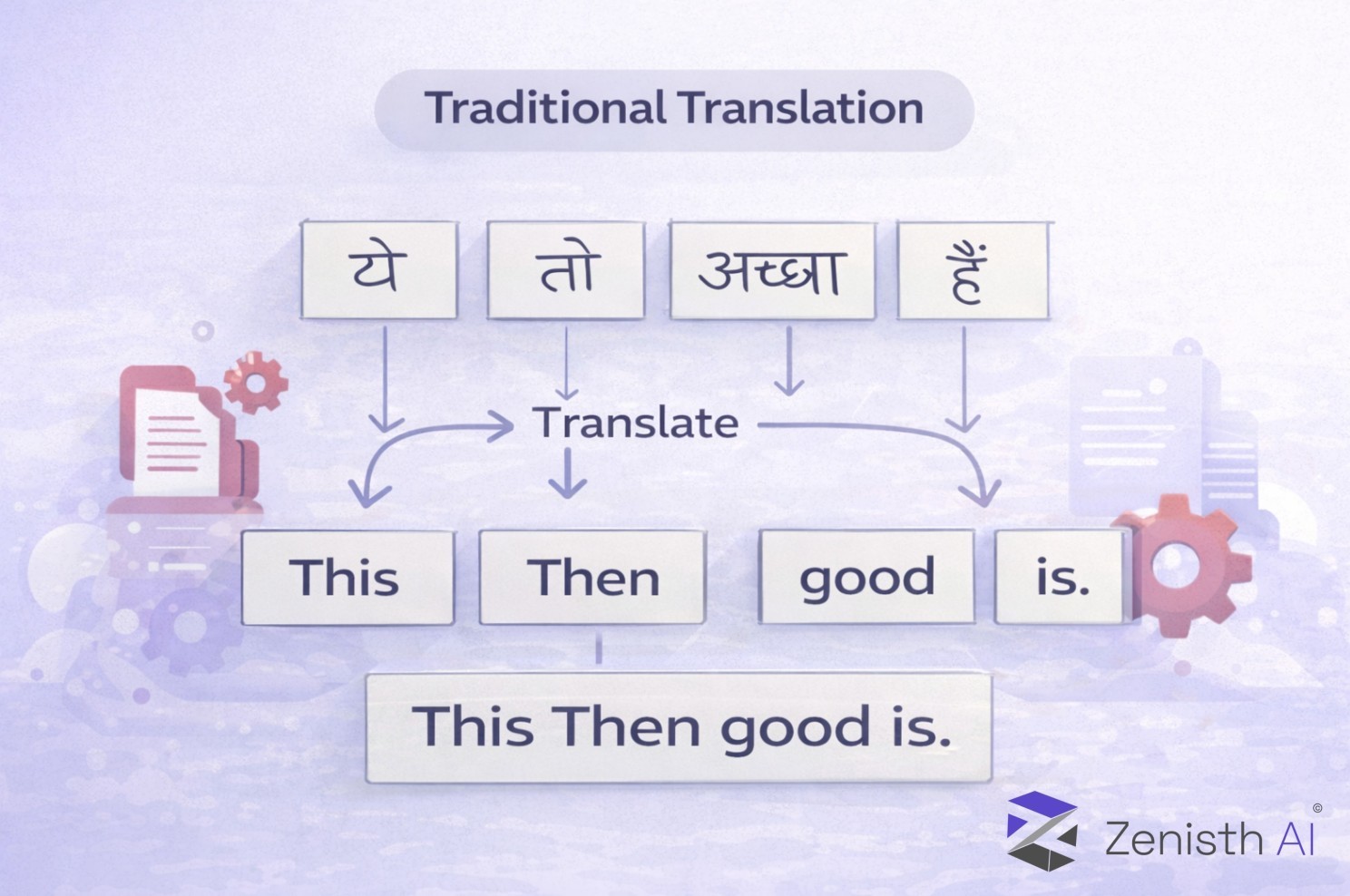

The Old Guard: The Limitations of Segment-Based Translation

Traditional translation technology is built on the unit of the "segment" (usually a sentence).

A CAT tool works by breaking a document into sentences and checking a database (the TM) to see whether that sentence, or a close "fuzzy" match, has been translated before. If no sufficiently close match exists, the segment is sent to a basic MT engine.
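Exact and fuzzy TM matching is a standard CAT feature, and the segment-by-segment nature of the lookup is easy to see in code. The following is a minimal sketch, not how any particular CAT product works: the in-memory `TM` dictionary, the `split_segments` and `tm_lookup` helpers, and the 0.75 fuzzy threshold are all hypothetical, and Python's `difflib.SequenceMatcher` stands in for the proprietary match scoring that commercial tools use.

```python
import re
from difflib import SequenceMatcher

# Hypothetical translation memory: approved source segment -> approved target segment.
TM = {
    "Click Save to store your changes.": "Klicken Sie auf Speichern, um Ihre Änderungen zu sichern.",
    "Your session has expired.": "Ihre Sitzung ist abgelaufen.",
}

def split_segments(text):
    """Naive sentence segmentation; real CAT tools use configurable rules (e.g. SRX)."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]

def tm_lookup(segment, tm, fuzzy_threshold=0.75):
    """Return (match_type, score, target) for the closest TM entry to one segment."""
    best_score, best_target = 0.0, None
    for source, target in tm.items():
        # Character-level similarity ratio in [0, 1]; commercial tools use their own scoring.
        score = SequenceMatcher(None, segment, source).ratio()
        if score > best_score:
            best_score, best_target = score, target
    if best_score == 1.0:
        return "exact", best_score, best_target
    if best_score >= fuzzy_threshold:
        return "fuzzy", best_score, best_target  # reused, but a human must adapt it
    return "mt", best_score, None  # no usable match: fall back to machine translation

# Each sentence is looked up on its own; nothing about its neighbours is considered.
document = "Your session has expired. Click Save to store your edits. The printer is out of paper."
for segment in split_segments(document):
    match_type, score, target = tm_lookup(segment, TM)
    print(f"{match_type:5} {score:.2f}  {segment}")
```

Note that `tm_lookup` only ever sees one sentence. That isolation is the structural limitation discussed next.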

The Architectural Flaw: Because these tools process language sentence-by-sentence in isolation, they lack "inter-sentential context." They don't know that paragraph three contradicts paragraph one. They don't understand tone, sarcasm, or overarching brand voice.

The result is output that is often grammatically correct but functionally robotic, requiring significant human effort to "post-edit" into something natural.

The New Challenger: AI-Native and Agentic Workflows

AI-based translation moves beyond simple segment replacement. By using LLMs with large context windows, these systems can read an entire document, or even a whole project folder, before translating a single sentence.
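How much surrounding text a system can send alongside each passage is bounded by the model's context limit. A minimal sketch of one common strategy, assuming a hypothetical token budget and a crude one-token-per-word estimate (real tokenizers differ): expand a window of neighbouring paragraphs around the paragraph being translated until the budget is used up, and pass that window as read-only context. The function names and prompt wording are illustrative, not any specific product's API.

```python
def approx_tokens(text):
    # Rough assumption: about one token per whitespace-separated word.
    return len(text.split())

def build_context(paragraphs, index, budget):
    """Grow a contiguous window around paragraphs[index] while it fits the token budget.

    Returns inclusive (lo, hi) bounds into `paragraphs`.
    """
    used = approx_tokens(paragraphs[index])
    lo, hi = index, index
    grew = True
    while grew:
        grew = False
        # Try to add one paragraph before the window...
        if lo > 0 and used + approx_tokens(paragraphs[lo - 1]) <= budget:
            lo -= 1
            used += approx_tokens(paragraphs[lo])
            grew = True
        # ...then one paragraph after it.
        if hi < len(paragraphs) - 1 and used + approx_tokens(paragraphs[hi + 1]) <= budget:
            hi += 1
            used += approx_tokens(paragraphs[hi])
            grew = True
    return lo, hi

def build_prompt(paragraphs, index, budget, target_lang):
    """Hypothetical prompt: neighbouring text as context, one paragraph to translate."""
    lo, hi = build_context(paragraphs, index, budget)
    context = "\n\n".join(paragraphs[lo:hi + 1])
    return (f"Context (do not translate):\n{context}\n\n"
            f"Translate only this paragraph into {target_lang}:\n{paragraphs[index]}")
```

If the whole document fits within the budget, the window simply becomes the whole document; otherwise the nearest neighbours are preferred, since they are the most likely to resolve references such as pronouns.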

Crucially, modern AI workflows don't rely solely on what the model learned during training. They also use techniques such as Retrieval-Augmented Generation (RAG).

In an AI workflow, your existing Translation Memories and glossaries aren't obsolete; they are indexed in vector databases. The AI dynamically retrieves relevant past translations and terminology and uses them as context to generate accurate, stylistically consistent new translations.
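The retrieval step can be sketched in a few lines. This is a toy illustration under loud assumptions: a bag-of-words `Counter` stands in for a real embedding model, the `TranslationMemoryIndex` class stands in for a vector database, and the prompt wording is hypothetical. The shape is the point: embed the TM, find the entries most similar to the new source text, and place them, together with matching glossary terms, into the prompt as context.

```python
import math
import re
from collections import Counter

def embed(text):
    # Stand-in for a real embedding model: a sparse bag-of-words vector.
    return Counter(re.findall(r"\w+", text.lower()))

def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class TranslationMemoryIndex:
    """Hypothetical in-memory 'vector store' over (source, target) TM pairs."""

    def __init__(self, pairs):
        self.entries = [(embed(src), src, tgt) for src, tgt in pairs]

    def retrieve(self, query, k=2):
        q = embed(query)
        scored = sorted(((cosine(q, vec), src, tgt) for vec, src, tgt in self.entries), reverse=True)
        # Keep only the top-k entries that share at least some vocabulary with the query.
        return [(src, tgt) for score, src, tgt in scored[:k] if score > 0]

def build_rag_prompt(source, index, glossary, target_lang="German"):
    examples = "\n".join(f"- {s} => {t}" for s, t in index.retrieve(source))
    terms = "\n".join(f"- {s} => {t}" for s, t in glossary.items() if s.lower() in source.lower())
    return (f"Translate into {target_lang}. Use the approved terminology and match the style "
            f"of the reference translations.\n"
            f"Terminology:\n{terms or '- (none)'}\n"
            f"Reference translations:\n{examples or '- (none)'}\n"
            f"Source:\n{source}")

index = TranslationMemoryIndex([
    ("Your session has expired.", "Ihre Sitzung ist abgelaufen."),
    ("Click Save to store your changes.", "Klicken Sie auf Speichern, um Ihre Änderungen zu sichern."),
    ("The invoice is attached.", "Die Rechnung ist beigefügt."),
])
print(build_rag_prompt("Your session will expire soon.", index, {"session": "Sitzung"}))
```

Unlike the exact or fuzzy lookup in a CAT tool, the retrieved entries are not pasted into the output; they are given to the model as examples to follow.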

It is the difference between a tool that recalls past data and an agent that understands current intent.

Technical Showdown: Traditional vs. AI-Native

Here is how the architectures stack up across key technical criteria:

| Feature | Traditional Stack (CAT/NMT) | AI-Native Stack (LLM/Agentic) |
|---|---|---|
| Core Unit of Processing | The segment (a sentence in isolation). | The context window (documents, paragraphs, or an entire project history). |
| Glossary Adherence | Rigid: performs simple find-and-replace, often causing grammatical errors due to inflection. | Adaptive: understands the term's meaning and integrates it naturally into the sentence structure. |
| Tone & Style | Non-existent: requires separate style guides that humans must apply manually. | Instruction-based: can be prompted via system instructions to adopt specific personas (e.g., "friendly marketing," "strict legal"). |
| Handling Ambiguity | Poor: guesses from statistical patterns within a single sentence, often producing overly literal translation errors. | High: uses surrounding context to resolve ambiguous terms (e.g., knowing whether "bank" means a financial institution or a river edge). |
| Workflow Automation | Linear: fixed pipelines with rigid handoffs (MT -> Human Editor -> Proofreader). | Agentic: autonomous agents can run self-correction loops, perform quality estimation, and route tasks dynamically based on confidence scores. |
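The "self-correction loop" in the last row can be sketched as a small control loop. This is a hypothetical outline, not a specific framework: `translate` and `estimate_quality` are caller-supplied functions (in practice an LLM call and a quality-estimation model), and the 0.85 threshold and three-attempt limit are illustrative assumptions. Each draft is scored; a low score triggers a retry with feedback, and if no draft clears the bar the best one is routed to a human.

```python
from dataclasses import dataclass
from typing import Callable, Optional

@dataclass
class Result:
    text: str
    score: float
    attempts: int
    route: str  # "publish" or "human_review"

def translate_with_self_correction(
    source: str,
    translate: Callable[[str, Optional[str]], str],  # hypothetical: (source, feedback) -> draft
    estimate_quality: Callable[[str, str], float],   # hypothetical: (source, draft) -> score in [0, 1]
    threshold: float = 0.85,
    max_attempts: int = 3,
) -> Result:
    feedback = None
    best_text, best_score = "", -1.0
    for attempt in range(1, max_attempts + 1):
        draft = translate(source, feedback)
        score = estimate_quality(source, draft)
        if score > best_score:
            best_text, best_score = draft, score
        if score >= threshold:
            return Result(draft, score, attempt, "publish")
        # Feed the QE result back into the next attempt.
        feedback = f"Previous draft scored {score:.2f}; revise for accuracy and terminology."
    # No draft was confident enough: hand the best one to a linguist.
    return Result(best_text, best_score, max_attempts, "human_review")

# Stub components for illustration only.
def fake_translate(source, feedback):
    return "draft v2" if feedback else "draft v1"

def fake_qe(source, draft):
    return 0.9 if draft == "draft v2" else 0.6

print(translate_with_self_correction("Hello", fake_translate, fake_qe))
```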

The Organizational Benefits of Onboarding AI Translation

Moving to an AI-native translation stack is not just about getting a "slightly better draft." It fundamentally changes the economics and velocity of localization.

1. Scalability Through "Zero-Touch" Workflows

Traditional MT output typically needs human review because its errors are hard to predict. AI, when wrapped in proper guardrails and RAG systems, can reach a level of fluency that makes "zero-touch" translation viable for lower-tier content (e.g., knowledge base articles, user comments), freeing up human experts for high-value creative assets.
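In practice, "zero-touch" is a routing decision made per piece of content. A minimal sketch, assuming a hypothetical three-tier content classification, made-up quality-estimation (QE) thresholds, and a boolean result from an automated glossary check: low-tier content that clears its threshold is published without review, while creative assets always go to a linguist.

```python
from enum import Enum

class Tier(Enum):
    LOW = "low"            # e.g. knowledge base articles, user comments
    STANDARD = "standard"
    CREATIVE = "creative"  # high-value marketing assets

# Hypothetical minimum QE scores for publishing without human review.
# None means the tier is never zero-touch.
ZERO_TOUCH_THRESHOLDS = {Tier.LOW: 0.80, Tier.STANDARD: 0.92, Tier.CREATIVE: None}

def route(tier: Tier, qe_score: float, glossary_ok: bool) -> str:
    threshold = ZERO_TOUCH_THRESHOLDS[tier]
    if threshold is None:
        return "human"  # creative assets always get a linguist
    if glossary_ok and qe_score >= threshold:
        return "zero_touch"
    return "human"

print(route(Tier.LOW, 0.85, glossary_ok=True))
print(route(Tier.CREATIVE, 0.99, glossary_ok=True))
```

Keeping the thresholds in a table rather than in code makes it easy to tighten or relax them per tier as quality data accumulates.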

2. Dynamic Adherence to Brand Voice

Instead of relying on static style guides that nobody reads, you can encode your brand's voice directly in the AI system's instructions. A marketing team can instruct the AI to "Translate this set of UI strings for a Gen Z audience in Germany, keeping it punchy and informal," and the LLM will adjust register and vocabulary accordingly, something traditional tools cannot do.
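One way to make brand voice reusable rather than ad hoc is to store it as data and generate the system instructions from it. The sketch below is an assumption-laden illustration: the `VoiceProfile` fields and prompt wording are hypothetical, and the output uses the generic list of `{"role": ..., "content": ...}` chat messages that many LLM APIs accept, so adapt it to whichever client you actually use. No model is called here.

```python
from dataclasses import dataclass, field

@dataclass
class VoiceProfile:
    locale: str
    audience: str
    register: str  # e.g. "informal, address the user as 'du'"
    style_notes: list[str] = field(default_factory=list)

def build_messages(profile: VoiceProfile, strings: list[str]) -> list[dict]:
    """Turn a stored voice profile into hypothetical system + user chat messages."""
    system = (
        f"You are a localization specialist translating UI strings into {profile.locale}.\n"
        f"Audience: {profile.audience}. Register: {profile.register}.\n"
        + "".join(f"- {note}\n" for note in profile.style_notes)
        + "Return one translation per line, in the same order, without commentary."
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": "\n".join(strings)},
    ]

gen_z_de = VoiceProfile(
    locale="de-DE",
    audience="Gen Z users in Germany",
    register="informal, address the user as 'du'",
    style_notes=["Keep it punchy: short sentences, no filler.", "Prefer everyday words over corporate jargon."],
)
for message in build_messages(gen_z_de, ["Save", "Log out"]):
    print(message["role"], "->", message["content"])
```

Because the profile is plain data, the same strings can be re-run against a different profile (for example, a formal legal register) without touching the pipeline.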

3. Turning "Post-Editing" into "Reviewing"

The psychological toll on linguists fixing robotic, segment-based MT output is high. AI output is generally more fluent and contextually aware. Linguists spend less time fixing basic grammar and more time ensuring cultural fit, shifting their role from "janitor" to "editor."

Conclusion

Traditional translation tools were designed for an era in which data scarcity was the problem. Today, the problem is data volume and velocity.

Adopting AI-based translation isn't just an upgrade; it's a necessary re-platforming to handle the speed of modern business. By moving from rigid, segment-based tools to context-aware AI workflows, organizations don't just translate faster; they communicate better.